Trusted by Millions

Save up to 58%*

Get easy-to-use, easy-to-install antivirus protection against advanced online threats. Plus online privacy and ID theft protection with select plans.

For the first year. Savings compared to the renewal price.

Offer details below.*

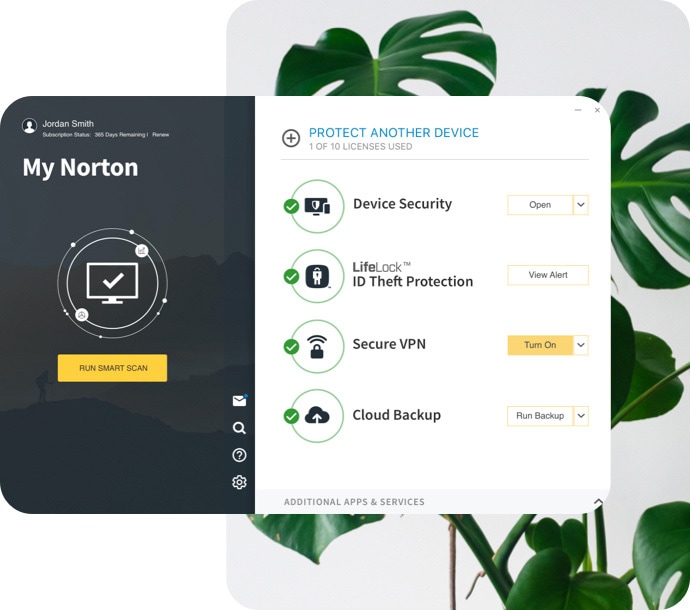

Norton™ 360 with LifeLock™ Plans

Devices + online privacy + identity protection

Help block hackers from your devices, keep your online activity private, and protect your identity, all in one. It’s never been easier.

Norton™ 360 Plans

Devices + online privacy protection

Device security helps block hackers and Norton Secure VPN helps you keep your online activity private.

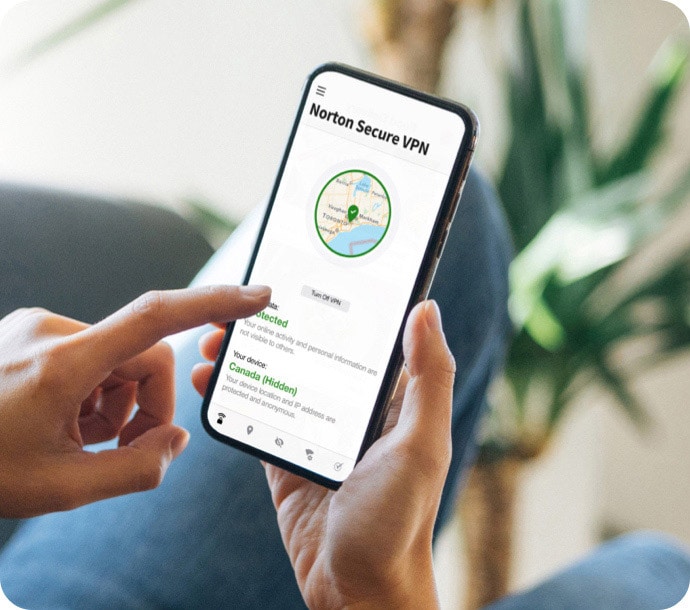

Norton™ Secure VPN Plan

Online privacy protection

Norton Secure VPN helps block hackers from seeing what you do online, over public or even home Wi-Fi.

Norton Small Business

Enjoy peace of mind knowing your business’s devices and customer data are more secure.

Norton technology blocks thousands of threats every minute.

Count on decades of experience and a proven track record of keeping people safer every day.

Get the protection you and your family need in one place.

A trademark of Ziff Davis, LLC. Used under license. Reprinted with permission. © 2022 Ziff Davis, LLC. All Rights Reserved. Best of the Year awarded in 2021.

AAA Rating in Consumer Endpoint Protection from SE Labs, Jan-Dec 2021.

AV-TEST, “Best Protection and Best Performance,” Norton 360, Jan-Dec 2021.

No one can prevent all cybercrime or identity theft.

* Important Subscription, Pricing and Offer Details:

2 Requires an automatically renewing subscription for a product containing antivirus features. For further terms and conditions please see norton.com/virus-protection-promise.

4 Only available on Windows systems (but not in S mode or on ARM processors).

‡ Monitoring available on Windows™ PC, iOS and Android™ devices. Not all features available on all platforms.

§ Monitoring not available in all countries and varies based on region.

The Norton and LifeLock Brands are part of Gen. LifeLock identity theft protection is not available in all countries.

Copyright © 2024 Gen Digital Inc. All rights reserved. Gen trademarks or registered trademarks are property of Gen Digital Inc. or its affiliates. Firefox is a trademark of Mozilla Foundation. Android, Google Chrome, Google Play and the Google Play logo are trademarks of Google, LLC. Mac, iPhone, iPad, Apple and the Apple logo are trademarks of Apple Inc., registered in the U.S. and other countries. App Store is a service mark of Apple Inc. Alexa and all related logos are trademarks of Amazon.com, Inc. or its affiliates. Microsoft and the Windows logo are trademarks of Microsoft Corporation in the U.S. and other countries. The Android robot is reproduced or modified from work created and shared by Google and used according to terms described in the Creative Commons 3.0 Attribution License. Other names may be trademarks of their respective owners.